Docker offers native tooling for Windows and Mac OSX built on each platform's own hypervisor (Hyper-V on Windows, HyperKit on OSX). For several reasons we still prefer a standardised docker-engine set up using docker-machine and Oracle’s VirtualBox technology.

In addition, we have developed docker-workbench, a custom Go utility that helps standardise the creation of the docker engine and its configuration for use across a team of developers, engaged in multiple web projects, in heterogeneous environments: Windows 7-10, OSX and Linux.

The primary advantages of docker-workbench over native tooling boil down to:

- project specific docker-compose configs that can be versioned and used by all developers on the team, regardless of OS or directory set up

- elegant management of hosts/DNS and ports for applications

- easy access to the containerised applications from mobile, tablet and other network devices

But this approach assumes:

- apps use HTTP traffic on ports 80/443

- project folder name holding the docker-compose config matches the hostname used in the URL for testing the app

Things get more complex really quickly once you take those simple assumptions away.

Install Pre-Requisites

Detailed installation instructions are available here, if my high speed OSX brew based walkthrough doesn’t suffice:

TLDR; summary:

- install Oracle VirtualBox; most ubiquitous virtual machine environment

- install Docker Machine; Docker tool for managing the installation of Docker engines

- install Docker Client; the CLI you use to interact with Docker engines

- install Docker Compose; the CLI to assist with application composition

- install the Go language and set GOPATH

- install the docker-workbench utility

With Homebrew on OSX I ran through the following set up:

brew cask install virtualbox

brew install docker-machine

brew install docker

brew install docker-compose

brew install go

go get -u github.com/justincarter/docker-workbench

Note, you may have several of these installed already; they’re very common tools. Sometimes folks get tripped up getting Go installed correctly, maybe by forgetting to set the GOPATH. Just follow the brew hints carefully.
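If the GOPATH is what trips you up, something like the following in your shell profile is usually enough. This is a minimal sketch: it assumes the conventional $HOME/go workspace location (my assumption, not something docker-workbench requires) and relies on go get placing the compiled binary in $GOPATH/bin.

```shell
# Assumption: use the conventional $HOME/go workspace unless GOPATH is already set.
export GOPATH="${GOPATH:-$HOME/go}"

# "go get" installs compiled binaries into $GOPATH/bin, so add it to the PATH
# to make the docker-workbench command available from any directory.
export PATH="$PATH:$GOPATH/bin"
```

Open a new terminal (or source your profile) afterwards so the docker-workbench command can be found.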

Creating a Workbench

Quick check to see all is well.

$ docker-workbench

docker-workbench v1.5

Provision a Docker Workbench for use with docker-machine and docker-compose

Usage:

docker-workbench [options] COMMAND

Options:

--help, -h show help

--version, -v print the version

Commands:

create Create a new workbench machine in the current directory

up Start the workbench machine and show details

proxy Start a reverse proxy to the app in the current directory

help Shows a list of commands or help for one command

Run 'docker-workbench help COMMAND' for more information on a command.

Setting up a “workbench” entails using the docker-workbench utility to create a Docker engine with a bunch of helpful settings. docker-workbench does some self-configuration based on your preferred directory structure for managing projects.

A “workbench” is created in the context of the working directory from which the docker-workbench create command is run. For example, on my laptop I keep all my code projects in a folder called ~/code and running docker-workbench create spins up a docker-engine called code:

$ cd ~/code

$ docker-workbench create

Running pre-create checks...

Creating machine...

(code) Copying /Users/modius/.docker/machine/cache/boot2docker.iso to /Users/modius/.docker/machine/machines/bingo-workbench/boot2docker.iso...

(code) Creating VirtualBox VM...

...>>> provisioning guff... yadda...yadda...yadda...>>>

...>>> more on this later...

$ docker-machine ls

NAME ACTIVE DRIVER STATE URL SWARM DOCKER ERRORS

code * virtualbox Running tcp://192.168.99.100:2376 v17.04.0-ce
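The naming rule at work here can be sketched in a line of shell. The workbench_name helper is hypothetical, written only to illustrate that the machine takes the name of the directory you run the command from; it is not part of docker-workbench.

```shell
# Hypothetical helper: the workbench (machine) name is the last component
# of the directory that "docker-workbench create" is run from.
workbench_name() {
  basename "$1"
}

workbench_name /Users/modius/code   # prints: code
```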

Some folks in the office run a lot of different projects, and prefer to group all the apps of a specific type or for a specific client into their own “workbench”.

For example, you might want a separate workbench for each client:

/Users/modius/projects

├── aoc <--- client

│ ├── rio2016 <--- client projects

│ └── sochi2014

├── bingo

├── bng

└── daemon

So you’d spin up an aoc specific workbench like this:

$ cd ~/projects/aoc

$ docker-workbench create

docker-workbench helps to standardise a specific config and provisioning process for docker-machine create. You can have one or many VMs; whatever floats your boat.

Workbench provisioning

TLDR; summary

- create a boot2docker based docker-engine using docker-machine with intelligent defaults

- download and install a specially customised reverse proxy

- create a /workbench share to the working directory

If we look a bit more closely at the docker-workbench create output there are a few critical pieces to the creation and provisioning process.

Docker engine

Provisioning with boot2docker...

Copying certs to the local machine directory...

Copying certs to the remote machine...

Setting Docker configuration on the remote daemon...

Checking connection to Docker...

Docker is up and running!

docker-workbench uses the native docker-machine tooling to create a VirtualBox VM using Boot2Docker with a bunch of settings that make sense for general development. You can change these settings via the VirtualBox UI as needed.
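For a rough idea of what this wraps, a hand-rolled equivalent might look like the command printed below. The flags are standard docker-machine VirtualBox driver options, but the memory, CPU and disk values are illustrative assumptions rather than the defaults docker-workbench actually applies. The print_create_command helper is hypothetical and only prints the command; it does not run it.

```shell
# Hypothetical helper: prints (does not run) a docker-machine command similar
# in spirit to what a workbench needs. All three sizing values are assumptions.
print_create_command() {
  name="$1"
  memory_mb=2048   # assumed value, in MB
  cpus=2           # assumed value
  disk_mb=20000    # assumed value, in MB
  printf 'docker-machine create --driver virtualbox --virtualbox-memory %s --virtualbox-cpu-count %s --virtualbox-disk-size %s %s\n' \
    "$memory_mb" "$cpus" "$disk_mb" "$name"
}

print_create_command code
```

Printing the command rather than executing it keeps the sketch safe to run on a machine that already has a workbench called code.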

Workbench proxy

Installing Docker Workbench Proxy...

Unable to find image 'justincarter/docker-workbench-proxy:latest' locally

latest: Pulling from justincarter/docker-workbench-proxy

Part of the docker-workbench toolkit is a specially modified NGINX proxy container that is automatically spun up to route HTTP traffic to your web apps. More on this awesome sauce below.

Universal shared folder

Stopping "projects"...

Machine "projects" was stopped.

Adding /workbench shared folder...

Starting "projects"...

The working directory is automatically configured as a shared folder inside the VM that is always named /workbench. This step is critical, as it means you can commit and share docker-compose.yml files with VOLUMEs easily between team members.
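To see why this makes compose files portable, here is a small sketch of the path translation involved. The to_workbench_path helper is hypothetical; it simply rewrites a path under your own workbench directory into the matching path under /workbench, which is the only path a shared docker-compose.yml ever needs to mention.

```shell
# Hypothetical helper: map a path inside the host workbench directory
# to the path it has inside the VM, where the share is always /workbench.
to_workbench_path() {
  root="${1%/}"   # workbench directory on the host, trailing slash removed
  path="$2"       # a file or folder somewhere below that directory
  case "$path" in
    "$root")   printf '/workbench\n' ;;
    "$root"/*) printf '/workbench/%s\n' "${path#"$root"/}" ;;
    *)         echo "not inside $root: $path" >&2; return 1 ;;
  esac
}

to_workbench_path /Users/modius/code /Users/modius/code/lucee-app/www
# prints: /workbench/lucee-app/www
```

Two developers with different host directories get the same /workbench path, so neither has to edit the volume settings.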

If all is well you should be able to talk to the docker engine just as you would any other, using the docker client, and see the sweet docker_workbench_proxy quietly running in the background:

$ eval "$(docker-machine env code)"

$ docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

0ef5a154ab95 justincarter/docker-workbench-proxy "/app/docker-entry..." 3 days ago Up 3 days 0.0.0.0:80->80/tcp, 443/tcp docker_workbench_proxy

You can tap out whatever Docker incantation you like – it’s a normal everyday docker engine.

Getting a Project Started

The “workbench” directory is designed to contain multiple applications, each application housed in its own sub-directory (typically cloned from a Git repository).

Let’s assemble a simple web app using Lucee 5.1 and NGINX and put it in a sub-directory called ./lucee-app:

mkdir lucee-app

cd lucee-app

touch docker-compose.yml

mkdir www

echo "<h1>hello world</h1>" > www/index.cfm

So we end up with something like:

lucee-app

├── docker-compose.yml

└── www

    └── index.cfm

The workbench proxy is configured to automatically route traffic to any containers that listen on port 80 and are configured with a VIRTUAL_HOST environment variable nominating the host name (wildcards allowed) the application responds to.
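The wildcard idea can be illustrated with a few lines of shell. This is only a sketch: shell glob matching stands in for whatever matching rules the proxy really uses, and host_matches is a hypothetical helper, not something the proxy provides.

```shell
# Sketch only: shell glob matching stands in for the proxy's own rules.
host_matches() {
  pattern="$1"   # e.g. a VIRTUAL_HOST value such as "lucee-app.*"
  host="$2"      # e.g. the host name of an incoming request
  case "$host" in
    $pattern) return 0 ;;   # intentionally unquoted so "*" acts as a wildcard
    *)        return 1 ;;
  esac
}

host_matches "lucee-app.*" "lucee-app.192.168.99.100.nip.io" && echo "routed to lucee-app"
host_matches "lucee-app.*" "other-app.192.168.99.100.nip.io" || echo "not routed to lucee-app"
```

The trailing wildcard is what lets the same setting work no matter which IP address a particular workbench VM ends up with.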

If we’re consistent in keeping the folder name and host headers the same, configuration becomes obvious. Let’s update the docker-compose.yml file for “lucee-app” to look like this:

lucee-app:

  image: lucee/lucee51-nginx

  environment:

    - "VIRTUAL_HOST=lucee-app.*"

  volumes:

    - "/workbench/lucee-app/www:/var/www"

Note the consistent naming:

- ./lucee-app is the directory name of the application

- lucee-app: matches the service name at the top of the .yml file

- the environment variable VIRTUAL_HOST wildcard prefix

- the path used in the volume which maps the “www” folder into the container
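A quick sanity check for that naming convention might look like this. check_naming is a hypothetical helper, and it assumes the flat compose file layout used in this post, with the service name at the very start of a line; a file that nests services under a services: key would need a different check.

```shell
# Hypothetical helper: verify that the folder name, service name,
# VIRTUAL_HOST prefix and /workbench volume path all agree.
check_naming() {
  dir="${1%/}"
  app="$(basename "$dir")"
  file="$dir/docker-compose.yml"
  status=0
  # Service name at the start of a line, e.g. "lucee-app:"
  grep -q "^${app}:" "$file" || { echo "service name is not ${app}"; status=1; }
  # VIRTUAL_HOST value beginning with the folder name, e.g. "lucee-app."
  grep -Fq "VIRTUAL_HOST=${app}." "$file" || { echo "VIRTUAL_HOST does not start with ${app}."; status=1; }
  # Volume path under the shared folder, e.g. "/workbench/lucee-app/"
  grep -Fq "/workbench/${app}/" "$file" || { echo "volume path is not under /workbench/${app}/"; status=1; }
  return "$status"
}

# Usage, from the workbench directory:
#   check_naming ./lucee-app && echo "naming is consistent"
```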

You start the day with docker-workbench up in the project directory; analogous to docker-compose up. This ensures the “workbench” is running and returns some useful info about our little project.

$ cd lucee-app

$ docker-workbench up

Starting "code"...

Machine "code" is already running.

Run the following command to set this machine as your default:

eval "$(docker-machine env code)"

Start the application:

docker-compose up

Browse the workbench using:

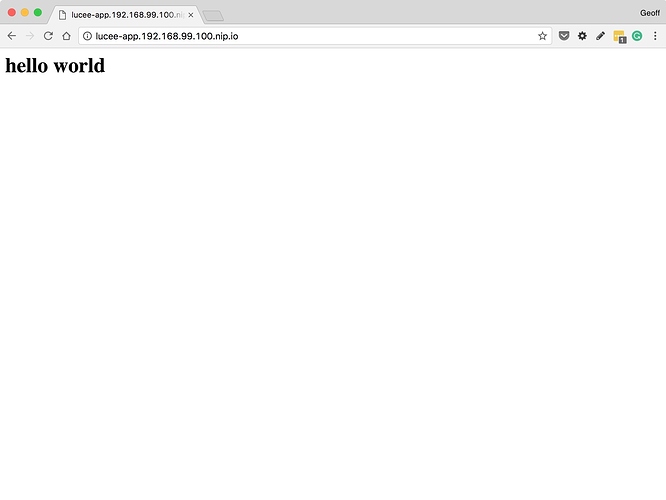

http://lucee-app.192.168.99.100.nip.io/

If you haven’t already, set the docker engine as the default:

eval "$(docker-machine env code)"

This is standard fare for docker tooling and sets environment variables that allow you to work with docker, docker-machine and docker-compose.

The “workbench” also suggests the URL that the application will be available on when it is running: http://lucee-app.192.168.99.100.nip.io/

The URL is made up of the value supplied in the VIRTUAL_HOST which must match the directory of the application (“lucee-app”), the IP address of the “workbench” VM (assigned by VirtualBox using DHCP from the Docker Machine network adapter), and “.nip.io” which is a wildcard DNS service that resolves names to their matching IP addresses. This means we do not have to manage our own DNS or hosts files or bother with unique, difficult to remember port numbers for each application.

This is awesome.
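The way the URL hangs together can be sketched in shell. Both helpers are hypothetical: workbench_url glues the folder name and VM address together, and nip_io_ip mimics, under the assumption of an IPv4 address, how a wildcard DNS service of this kind can recover the address embedded in the name.

```shell
# Hypothetical helper: build the browse URL from the app folder name
# and the workbench VM's IP address.
workbench_url() {
  app="$1"
  ip="$2"
  printf 'http://%s.%s.nip.io/\n' "$app" "$ip"
}

# Hypothetical helper: recover the IPv4 address embedded in a nip.io host name
# by dropping the ".nip.io" suffix and keeping the last four dot-separated fields.
nip_io_ip() {
  host="${1%.nip.io}"
  printf '%s\n' "$host" | awk -F. '{ print $(NF-3) "." $(NF-2) "." $(NF-1) "." $NF }'
}

workbench_url lucee-app 192.168.99.100      # prints: http://lucee-app.192.168.99.100.nip.io/
nip_io_ip lucee-app.192.168.99.100.nip.io   # prints: 192.168.99.100
```

On a real workbench the address would come from docker-machine ip code rather than being typed in by hand.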

Finally, start the application using standard Docker Compose tooling and use the URL to browse the app via the proxy:

$ docker-compose up

...snip...8<...snip...8<...snip...8<

lucee-app_1 | ===================================================================

lucee-app_1 | WEB CONTEXT (cbe856ff790c9ba5208811309bdf168b)

lucee-app_1 | -------------------------------------------------------------------

lucee-app_1 | - config:/opt/lucee/web (custom setting)

lucee-app_1 | - webroot:/var/www/

lucee-app_1 | - hash:cbe856ff790c9ba5208811309bdf168b

lucee-app_1 | - label:cbe856ff790c9ba5208811309bdf168b

lucee-app_1 | ===================================================================

lucee-app_1 |

lucee-app_1 | 2017-04-26 13:33:07.929 fixed LFI

lucee-app_1 | 2017-04-26 13:33:07.930 fixed salt

lucee-app_1 | 2017-04-26 13:33:07.934 fixed S3

lucee-app_1 | 2017-04-26 13:33:07.934 fixed PSQ

lucee-app_1 | 2017-04-26 13:33:07.934 fixed logging

lucee-app_1 | 2017-04-26 13:33:07.937 fixed old extension location

lucee-app_1 | 2017-04-26 13:33:07.937 fixed to big felix.log

lucee-app_1 | 2017-04-26 13:33:07.938 deploy web context

lucee-app_1 | 2017-04-26 13:33:07.941 loaded config

lucee-app_1 | 2017-04-26 13:33:07.943 loaded constants

lucee-app_1 | 2017-04-26 13:33:07.947 loaded loggers

lucee-app_1 | 2017-04-26 13:33:07.947 loaded temp dir

lucee-app_1 | 2017-04-26 13:33:07.948 loaded id

lucee-app_1 | 2017-04-26 13:33:07.948 loaded version

lucee-app_1 | 2017-04-26 13:33:07.949 loaded security

lucee-app_1 | 2017-04-26 13:33:07.949 loaded lib

lucee-app_1 | 26-Apr-2017 13:33:08.019 INFO [main] org.apache.coyote.AbstractProtocol.start Starting ProtocolHandler ["http-apr-8888"]

lucee-app_1 | 26-Apr-2017 13:33:08.026 INFO [main] org.apache.coyote.AbstractProtocol.start Starting ProtocolHandler ["ajp-apr-8009"]

And voila!

The upshot

Run as many apps as you like simultaneously across your team; no PORT conflicts, no VOLUME adjustments, no mucking around. Any containerised web application that listens on port 80 should be able to work with the Daemon Docker Workbench.
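As a small illustration of the "as many apps as you like" point, the sketch below lists a browse URL for every app folder in a workbench. list_app_urls is a hypothetical helper that assumes each app lives in its own sub-directory containing a docker-compose.yml and follows the folder-name convention described earlier.

```shell
# Hypothetical helper: print one URL per app folder found in the workbench.
list_app_urls() {
  workbench_dir="${1%/}"
  ip="$2"
  for compose in "$workbench_dir"/*/docker-compose.yml; do
    [ -f "$compose" ] || continue   # skip the unexpanded pattern when nothing matches
    app="$(basename "$(dirname "$compose")")"
    printf 'http://%s.%s.nip.io/\n' "$app" "$ip"
  done
}

# Usage, assuming a workbench called "code" in ~/code:
#   list_app_urls ~/code "$(docker-machine ip code)"
```

Every app shares port 80 on the same VM address, and only the host name differs.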

Just wonderful